First, the case for Biometric Authentication keeps getting stronger.

The recent Capital One credit card data breach compromised the data of 106 million individuals. And that is just the most recent incident in a long line of events that have left consumers’ personal information vulnerable to scammers. Add to that the fact that most of us practice alarmingly poor password hygiene.

According to a LoginRadius infographic, 61% of users don’t change their passwords for fear of forgetting them and a staggering 70% of millennials use one or two passwords that are easy to remember across multiple accounts. And the really scary part is that even those of us who recognize the dangers aren’t taking the appropriate actions to protect ourselves. Collective sigh.

In defense of consumers, passwords are a big pain. These challenges, risks, and attitudes, combined with advances in the ease and accuracy of biometrics, are opening the door to a new and better way to authenticate.

Are biometrics the silver bullet we’ve been waiting for?

Yes and no. A properly implemented biometric solution will be significantly more secure. And unlike passwords, which are easily forgotten, biometrics never need to be “reset”, don’t change, and are generally less frustrating to use. But just like any technology, biometrics are not immune to attack. Face recognition, voice biometrics, and even fingerprints can all be “spoofed” or faked. However, these attacks can be prevented by using liveness detection to identify presentation attacks. Liveness detection can be applied to both voice and face biometrics. In this article, we focus on facial liveness: what it is and why it’s critically important when deploying face biometrics for authentication.

What is facial liveness detection?

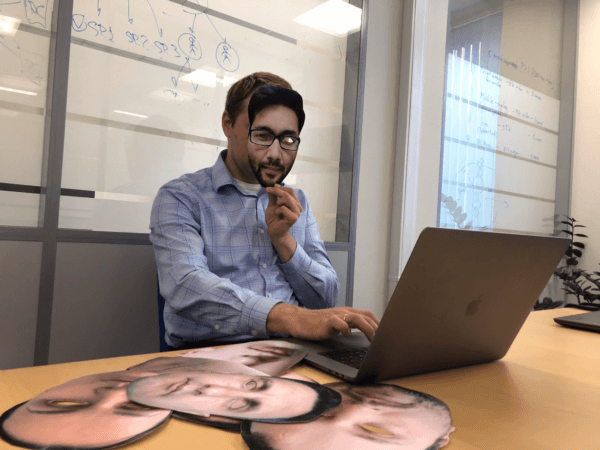

Just like it sounds, facial liveness detection, also called presentation attack detection, determines whether a face presented to a facial recognition system belongs to a live person or is a high-resolution photo, cut-out photo, 3D mask, or video. All of these are ways to “trick” the system into thinking it’s seeing the authorized user.

Liveness detection protects us in a world where our biometric data is easily accessible through a quick Google search or on our social media channels.

Why you shouldn’t authenticate without it.

Face recognition technology only verifies a match: it determines whether two different photos contain face data from the same person. Privacy issues aside, this technology by itself can be useful for identifying a face in a crowd.

Face recognition algorithms are not able to differentiate a ‘live’ face from one that is not live. This poses a significant threat when face biometrics are used for authentication, where users opt in to the technology instead of, or in addition to, traditional authentication means like passwords, PINs or keycards.

Facial liveness is a crucial step in the process of accurately authenticating a person with facial recognition technology when the facial recognition system is operating without human supervision, such as authentication on a mobile phone, online or during the process of remote customer onboarding (like when setting up a new bank account).

Liveness detection technology uses software and hardware-assisted approaches, and machine learning algorithms that are trained on large datasets to identify a presentation attack. It is not matching! It’s simply determining live vs not live.

Most facial liveness detection solutions rely on passive observation through software that detects such things as eye movement, lip movement or blinking. Masks, photos with eye holes cut out and videos can fool these liveness detection systems.

Some face biometric technologies use a “challenge-response” software approach in which the user is prompted to do something like turn their head or move within the proximity of the camera.
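
The challenge-response idea can be illustrated with a minimal sketch. It assumes a separate vision component (not shown) reports which action the user performed; the prompt names, the five-second timeout, and the functions `issue_challenge` and `verify_response` are all hypothetical and do not come from any particular product.

```python
import secrets
import time
from dataclasses import dataclass

# Hypothetical prompts; a real product would define its own set.
PROMPTS = ("turn_head_left", "turn_head_right", "move_closer")


@dataclass
class Challenge:
    prompt: str
    issued_at: float
    timeout_s: float = 5.0  # assumed response window, in seconds


def issue_challenge(now=None):
    """Pick an unpredictable prompt so a pre-recorded video cannot anticipate it."""
    issued = time.monotonic() if now is None else now
    return Challenge(prompt=secrets.choice(PROMPTS), issued_at=issued)


def verify_response(challenge, observed_action, now=None):
    """Accept only if the observed action matches the prompt and arrived in time."""
    current = time.monotonic() if now is None else now
    in_time = 0.0 <= current - challenge.issued_at <= challenge.timeout_s
    return in_time and observed_action == challenge.prompt
```

The random prompt and the time limit are what make a replayed photo or video harder to use, at the cost of asking the user to do something.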

Hardware-assisted methods require a sensor and utilize technologies such as 3D and radiometric measurements (infrared).

ID R&D believes in a truly passive liveness detection approach that doesn’t require active participation by the user. It essentially operates in the background, detecting signs of a spoofing attack through edge, depth and motion analysis, as well as passive observation of features like skin texture. Capturing multiple artifacts in a single frame enables a fast decision. In addition to delivering high accuracy, our passive liveness approach is invisible to fraudsters and completely frictionless for users.

Best Practices for Liveness Detection Deployment

In addition to deploying passive facial liveness, which we believe results in a better user experience without sacrificing any efficiency, the following are some important best practices to keep in mind:

- Seek a solution that works across channels and devices — eliminating the need for users to enroll multiple times for each point of authentication.

- Deploy a facial liveness solution that stays ahead of fraudsters, using the very latest advances in AI and machine learning.

- Work with an experienced team that demonstrates expertise in the ability to train and improve algorithms through the use of large datasets, including ensuring the data is properly collected and labeled.

- Partner with a team that is on the cutting edge of the technologies and their possible vulnerabilities. Choose a vendor that can respond quickly to new threats, adjust its algorithms, and even use cases from production to retrain and calibrate the system so that it works accurately with pictures from your specific domain.

Of course, as with any system, make sure you understand all the points of vulnerability in an overall face recognition solution. For instance, ensure biometric templates are encrypted (similar to the way passwords are hashed) and unusable to anyone who might gain access to them.

What next?

Once you verify that a user is indeed a real, live person, you can proceed to determine whether they are the right, authorized person using face biometric software. It’s a win-win: a more efficient and secure authentication method for the enterprise and a pleasant, frictionless experience for the user.
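
The ordering described here, liveness first and matching second, might be wired together roughly as follows. This is a sketch under stated assumptions: `liveness_score` and `match_score` stand in for whatever liveness and face recognition components are actually used, and the thresholds and the `authenticate` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical thresholds; real values depend on the vendor and risk tolerance.
LIVENESS_THRESHOLD = 0.5
MATCH_THRESHOLD = 0.8


@dataclass
class AuthResult:
    accepted: bool
    reason: str


def authenticate(
    image: bytes,
    enrolled_template: bytes,
    liveness_score: Callable[[bytes], float],
    match_score: Callable[[bytes, bytes], float],
) -> AuthResult:
    """Check liveness first; run face matching only on frames judged live."""
    if liveness_score(image) < LIVENESS_THRESHOLD:
        return AuthResult(False, "presentation attack suspected")
    if match_score(image, enrolled_template) < MATCH_THRESHOLD:
        return AuthResult(False, "face does not match enrolled user")
    return AuthResult(True, "live and matched")
```

Keeping the two checks as separate callables reflects the point made earlier: liveness detection only decides live versus not live, and matching remains the job of the face recognition system.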

ID R&D’s passive facial liveness detection product, IDLive™ Face, is available as an SDK for use with any face recognition system. If you would like to learn more, get in touch.